The Operating Pressure

Agent-assisted work feels fast when the session is fresh and the repo is small. The weakness appears later. Once the context window resets, the next session has to reconstruct what the system does, what changed, which decisions were already made, and what remains open.

That repeated recovery cost usually outweighs the gap in model capability that people tend to blame. The model can still reason. It just no longer has the operating history.

Why Chat History Is Not Memory

Chat is useful for interaction, but it is a weak place to store durable project state. It is hard to search, easy to interrupt, and disconnected from the repo, the task system, and the decisions that shaped the work. The result is that each new session starts by re-explaining what should already be part of the environment.

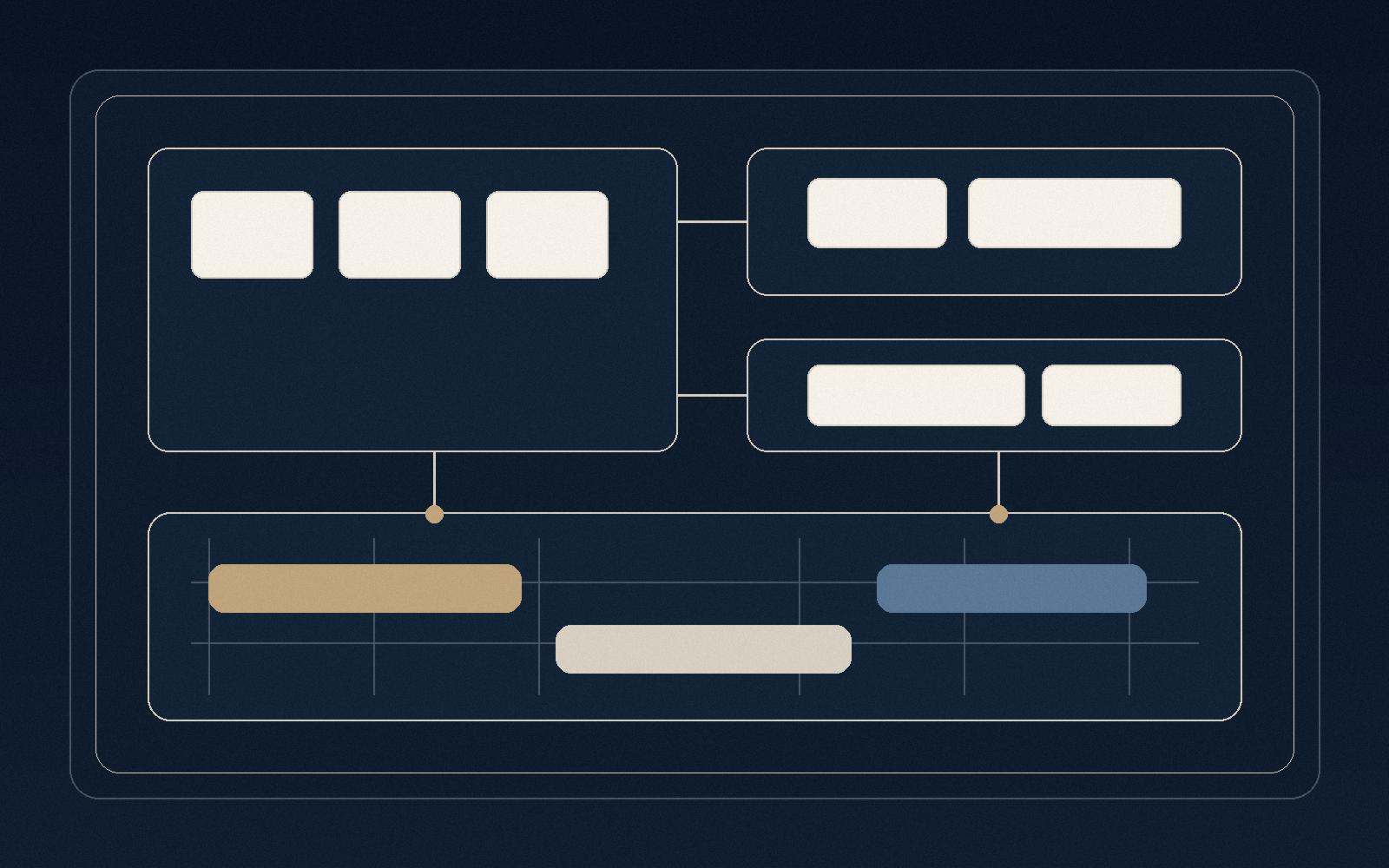

What Durable Memory Actually Includes

The fix is not “save everything.” The fix is to preserve the parts that make the next correct action recoverable. That usually means canonical repo docs, a durable task queue, explicit decision records, handoff notes, and a way to inspect open loops without replaying the whole project from scratch.
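As a rough illustration, the pieces listed above could live in a small structured file committed alongside the code. The sketch below is one possible shape, not a prescribed format: the names `ProjectMemory`, `Task`, `Decision`, and the JSON layout are all assumptions made for this example.

```python
from __future__ import annotations

import json
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class Task:
    """One entry in the durable task queue (hypothetical shape)."""
    id: str
    title: str
    status: str = "open"  # "open", "in_progress", or "done"


@dataclass
class Decision:
    """An explicit decision record: what was chosen and why."""
    id: str
    summary: str
    rationale: str


@dataclass
class ProjectMemory:
    """Hypothetical container for the state a new session needs."""
    tasks: list[Task] = field(default_factory=list)
    decisions: list[Decision] = field(default_factory=list)
    handoff_notes: list[str] = field(default_factory=list)

    def open_loops(self) -> list[Task]:
        # Anything not marked done is still an open loop.
        return [t for t in self.tasks if t.status != "done"]

    def save(self, path: Path) -> None:
        # Plain JSON keeps the record diffable and searchable in the repo.
        path.write_text(json.dumps(asdict(self), indent=2), encoding="utf-8")

    @classmethod
    def load(cls, path: Path) -> "ProjectMemory":
        raw = json.loads(path.read_text(encoding="utf-8"))
        return cls(
            tasks=[Task(**t) for t in raw["tasks"]],
            decisions=[Decision(**d) for d in raw["decisions"]],
            handoff_notes=list(raw["handoff_notes"]),
        )
```

The point of the sketch is that `open_loops()` answers "what is still unfinished" from the stored record alone, without replaying any chat history.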

How the System Behaves Differently

Once that memory layer exists, an agent can re-enter the repo, confirm the current task, inspect prior decisions, and keep moving with much less drift. Humans benefit too, because the operating record explains why the work changed direction instead of forcing everyone to infer intent from fragments.
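The re-entry step can be sketched as a short briefing function: pick the current task, pull the decisions attached to it, and count what else is still open. Everything here is hypothetical, including the `Task` and `Decision` shapes, the status values, and the rule that an in-progress task takes priority over an open one.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    id: str
    title: str
    status: str  # "open", "in_progress", or "done"


@dataclass(frozen=True)
class Decision:
    task_id: str
    summary: str
    rationale: str


def current_task(tasks: list[Task]) -> Task | None:
    # Assumed priority: resume in-progress work before starting open work.
    for wanted in ("in_progress", "open"):
        for task in tasks:
            if task.status == wanted:
                return task
    return None


def reentry_briefing(tasks: list[Task], decisions: list[Decision]) -> str:
    """Build the short summary a fresh session would read first."""
    task = current_task(tasks)
    if task is None:
        return "No open tasks."
    lines = [f"Current task: {task.id} {task.title}"]
    for decision in decisions:
        if decision.task_id == task.id:
            lines.append(
                f"Decision: {decision.summary} (why: {decision.rationale})"
            )
    others = [t for t in tasks if t.status != "done" and t.id != task.id]
    lines.append(f"Other open loops: {len(others)}")
    return "\n".join(lines)
```

Keeping the rationale next to each decision is what lets a reader, human or agent, see why the work changed direction rather than guess at it.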

Where It Pays Off

This matters most in codebases, SEO programs, operations tooling, documentation systems, and any environment where work compounds across many sessions. The more layered the context, the more expensive statelessness becomes.

The Decision Rule

If a project needs to survive interruption, handoff, and repeated re-entry, memory has to live in the system itself. That is the difference between using AI as a clever helper and using it as durable operational infrastructure.